Study finds many researchers believe AI could cause nuclear-level catastrophe: Russell Wald

Russell Wald, director of the Stanford Institute for Human-Centered AI, sounds off on 'The Story.'

After a Stanford survey of researchers showed that more than a third believe AI (artificial intelligence) could cause a "nuclear-level catastrophe," one of the directors of the California university's Institute for Human-Centered Artificial Intelligence said another key to that fear is deciphering what value system the human-inputted but self-determining bots will hold.

"I think that there is a little bit of overwhelming concern by a minority of people who are developing this technology. And one part of that is the concern that this technology will spin out of control and out of our hands," Russell Wald, the institute's managing director for policy and society, said Monday on "The Story with Martha MacCallum."

"I think that is a probably fairly limited subset. But the bigger issue here I think we need to look at is who has a seat at this table -- and ensuring that we have a more diverse set of people at the table so that we can get out of this hysteria a little bit."

Wald said that, at a moment when entities like ChatGPT are developing hyper-intelligence, it is important to have a handle on whether the developer has humankind's best interests in mind.

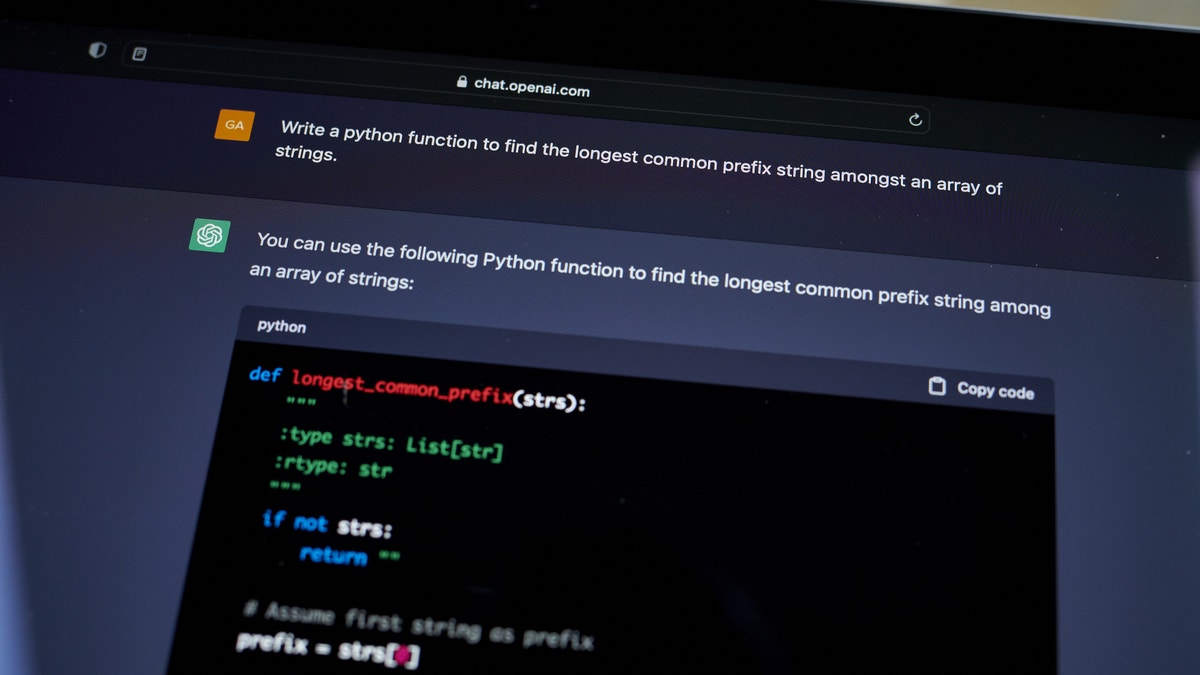

The ChatGPT chat screen is seen open on a laptop computer. (Gabby Jones/Bloomberg via Getty Images)

At present, he said, only China and the United States have entities with that ability, the implication being that the two countries have radically different foundations and value systems.

"Under that, the question is whose values are at the table? Is it Silicon Valley's and Beijing's or is it the rest of the country?" he said.

"And if we want the rest of the country at the table, we're going to have to provide a level of compute infrastructure to make it available for other stakeholders to be here and to have a more balanced and thoughtful view about this."

Anchor Martha MacCallum replied that Google executive Sundar Pichai reportedly spoke out in favor of AI entities reflecting American and Western values, showing that how AI will respond in subjective terms to its objective outputs is a legitimate concern.

The OpenAI logo on a laptop computer arranged in the Brooklyn borough of New York, US, on Thursday, Jan. 12, 2023. Microsoft Corp. is in discussions to invest as much as $10 billion in OpenAI, the creator of viral artificial intelligence bot ChatGPT, according to people familiar with its plans. (Gabby Jones/Bloomberg via Getty Images)

In a preview segment for Tucker Carlson's wide-ranging interview with Elon Musk airing Monday at 8 PM ET on Fox News, the Tesla and Twitter CEO affirmed the host's concern that some developers are teaching AI to "lie."

"That would be ironic," Musk replied. "But faith, the most ironic outcome, is most likely, it seems."

Wald said he doesn't believe AI is being trained to lie, but instead that there is "overconfidence" in the data systems it is being trained on, in that it will begin to invent context and create its own responses.

"That is a concern. And it's this emergent area that we're starting to study and have to understand a little bit more as to why that's happening," he said. "And a good part of that is the data."

"The other part of this is that we need to ensure that the right values are at this and that we have a diverse set of views so that we don't have this just cultural-relativist side, but we actually have a full diversity of views that are coming through on some of this technology."